01

Start with the premise and then distrust it

The premise sounds tidy. Several languages share Devanagari script. Build a shared challenge around that common surface. The trouble starts once you ask what is actually common beyond appearance. Script is shared. Difficulty is not. Annotation demands are not. Social interpretation is definitely not.

That is why I still think this paper works best when read as three neighboring studies rather than a single modeling story.

What I like about that reading is that it prevents false neatness. It keeps us from saying one benchmark, one solution, one takeaway when the task itself is clearly resisting that simplification.

02

Subtask A is the clean one

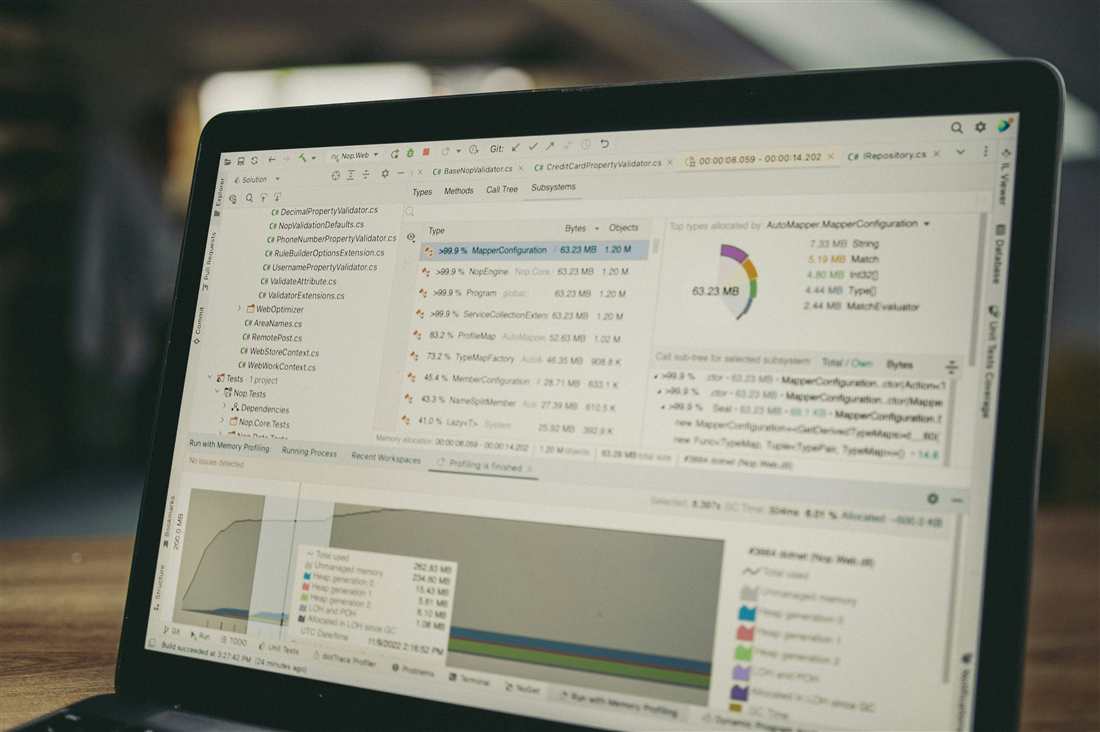

Language identification across Nepali, Marathi, Sanskrit, Bhojpuri, and Hindi is comparatively structured. The training set is large, the labels are stable, and the question is primarily discriminative. The final ensemble with IndicBERT V2 fallback reaches F1 = 0.9980.
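The text does not say how the fallback is triggered, so the following is only a minimal sketch of one plausible reading: the ensemble members vote, and when no label wins a clear majority the prediction of a designated fallback model is used instead. The function name `vote_with_fallback`, the `min_agreement` threshold, and the example labels are all hypothetical and are not taken from the paper.

```python
from collections import Counter
from typing import Sequence


def vote_with_fallback(
    votes: Sequence[str],
    fallback: str,
    min_agreement: float = 0.5,
) -> str:
    """Return the majority label, or the fallback model's label when agreement is weak.

    votes:         labels predicted by the ensemble members (hypothetical setup)
    fallback:      label predicted by the designated fallback model
    min_agreement: share of votes the top label must exceed (assumed value)
    """
    if not votes:
        return fallback
    label, count = Counter(votes).most_common(1)[0]
    if count / len(votes) > min_agreement:
        return label
    return fallback


# Two of three members agree, so the majority label is kept.
print(vote_with_fallback(["Hindi", "Hindi", "Marathi"], fallback="Marathi"))

# All members disagree, so the fallback model decides.
print(vote_with_fallback(["Hindi", "Marathi", "Nepali"], fallback="Bhojpuri"))
```

A rule of this shape only matters on the small share of inputs where the members disagree, which is one reason a fallback can help without changing most predictions.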

I resist the temptation to call that solved in any universal sense, but I do think Subtask A is the place where the benchmark is least ambiguous.

That clarity matters because it gives a reference point. It shows what success looks like when the problem is mostly discriminative and the label boundary is comparatively stable.

03

Subtask B is where the benchmark becomes less forgiving

Hate speech detection in Hindi and Nepali introduces a different kind of difficulty. Now the model is not simply distinguishing languages. It is making a judgment under class imbalance. The training distribution leans heavily toward non-hate examples.

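That is why focal loss mattered here. With $L_{\text{focal}} = -(1 - p_t)^{\gamma} \log(p_t)$, where $p_t$ is the probability the model assigns to the true class, the loss stops rewarding easy majority examples so generously: when the model is already confident and correct, the factor $(1 - p_t)^{\gamma}$ shrinks that example's contribution toward zero.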
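To make the down-weighting concrete, here is a small sketch that compares plain cross-entropy with the focal loss for a single example at three confidence levels. The value `gamma = 2.0` is an assumption chosen for illustration; the text does not state which value was used.

```python
import math


def cross_entropy(p_t: float) -> float:
    """Standard log loss for one example, given the probability of the true class."""
    return -math.log(p_t)


def focal_loss(p_t: float, gamma: float = 2.0) -> float:
    """Focal loss for one example: cross-entropy scaled by (1 - p_t) ** gamma."""
    return -((1.0 - p_t) ** gamma) * math.log(p_t)


# Easy, moderate, and hard examples (probabilities are illustrative only).
for p_t in (0.95, 0.60, 0.10):
    ce = cross_entropy(p_t)
    fl = focal_loss(p_t, gamma=2.0)
    print(f"p_t={p_t:.2f}  CE={ce:.4f}  focal={fl:.4f}  kept={fl / ce:.4f}")
```

The `kept` column is simply `(1 - p_t) ** gamma`. With this assumed gamma, an easy example at `p_t = 0.95` keeps about 0.25% of its cross-entropy loss, while a hard example at `p_t = 0.10` keeps 81%. Setting `gamma = 0` recovers ordinary cross-entropy.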

The final ensemble reaches F1 = 0.7652, recall = 0.7441, and precision = 0.7925. Those are useful numbers, and they fit the difficulty of the task better than a cleaner success story would.
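A quick arithmetic check on those three figures, offered as a sketch rather than a statement about how the paper computed them: the harmonic mean of the reported precision and recall can be compared with the reported F1. The helper name `harmonic_f1` is my own.

```python
def harmonic_f1(precision: float, recall: float) -> float:
    """F1 as the harmonic mean of a single precision/recall pair."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Figures quoted in the text for the Subtask B ensemble.
precision, recall, reported_f1 = 0.7925, 0.7441, 0.7652

implied = harmonic_f1(precision, recall)
print(f"harmonic mean of P and R: {implied:.4f}")
print(f"reported F1:              {reported_f1:.4f}")
print(f"gap:                      {implied - reported_f1:+.4f}")
```

The harmonic mean comes out near 0.7675, slightly above the reported 0.7652. A small gap like this is what you would expect if the three numbers are averaged across classes separately (for example macro-averaged), since the average of per-class F1 scores need not equal the harmonic mean of averaged precision and recall. That reading is an assumption; the text does not say which averaging was used.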

To me, Subtask B is where the benchmark stops being about shared script and starts becoming about social judgment under imperfect evidence. That is a very different modeling burden.

04

Subtask C is where the semantic burden fully arrives

Target detection is the one I keep coming back to. Here the model must identify who is being attacked, whether hateful content exists, and how those cues are expressed through implication, social context, slang, and code-mixing.

The final chosen system for this task, Gemma-2 27B with ORPO, reaches F1 = 0.6804. I do not read that lower score as a disappointment. I read it as the benchmark finally exposing where interpretation becomes unavoidable.

This is the subtask that keeps the paper from collapsing into a comfortable multilingual success story. It makes the benchmark admit that shared script is a shallow commonality once the model has to identify socially implied targets.

05

Why the benchmark earns its complexity

This benchmark earns its complexity because it forces precision into the multilingual conversation. Shared region is not enough. Shared script is not enough. Once the task shifts from recognition to social interpretation, the benchmark stops being one problem even if the layout still looks unified.

That is the real value of the paper for me. It makes the split visible instead of smoothing it away. It shows where a multilingual story is truly shared and where it only looks shared from a distance.

That is also why this benchmark belongs in broader conversations about evaluation. It reminds us that benchmark design can compress differences that the model later has to recover the hard way.

06

What I would not let a future benchmark blur away

If I were to build on this kind of benchmark again, I would keep one rule in front of me: shared script is not a license to flatten different tasks into one story. The moment a benchmark hides those differences, the model starts getting credit for coherence it never really earned.

// Closing Thought

Shared script can make a benchmark look unified long after the real difficulty has split apart. I would rather the evaluation admit that early.